Alexa, Python and Kubernetes - a cookiecutter

Amazon Echo

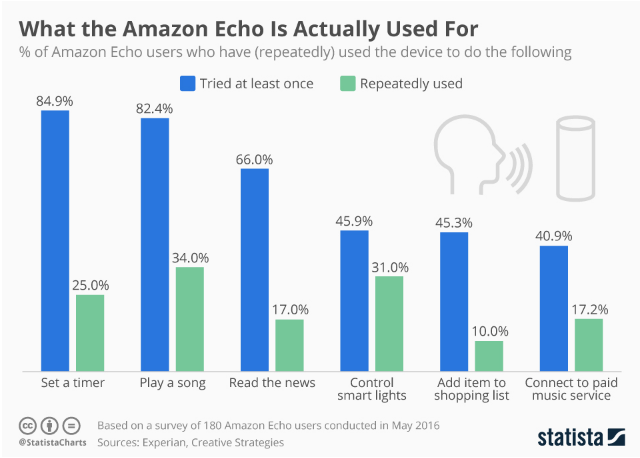

If you haven’t heard of the Echo, it’s a new device that can be described as Siri in your living room, except that people actually use it. And it does just that: it listens to your voice and handles simple queries.

It’s supposed to have a seven-piece microphone array (which your phone doesn’t have) that makes its speech recognition more accurate, it’s always on and listening (which makes the interaction more frictionless), and it has a great speaker. Being in the comfort of your own home, where talking to machines is somewhat less awkward, is perhaps another big reason behind its popularity.

Despite not having a great experience with it, I couldn’t resist the fun of developing for it. For now, I’m hoping that my unusual accent is the reason why we don’t get along that well, and that later generations will improve.

Alexa skills

Another advantage over Siri (although that’s changing) is that it has its own app store. Apps here are called “skills”. You don’t need machine learning expertise to develop most skills, since Amazon does the heavy lifting for you.

The way it works is:

- the user will speak to an Alexa device (like Amazon Echo)

- Amazon does the speech recognition, intent classification and entity extraction, and calls our service with the processed speech (e.g. the user says “what’s the weather in London”, and the service gets `{'intent': 'KnowWeather', 'entities': {'city': 'London'}}`)

- the skill receives the nicely parsed data, does its logic (e.g. queries a weather API for London) and returns a response to Amazon in reply to its HTTP POST request

- Echo converts the skill’s text response to speech.
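
As a rough illustration of the skill's side of that flow, here is a minimal sketch in plain Python, with no web framework. It assumes the request body Amazon posts has already been decoded from JSON into a dict; the real envelope nests the intent under `request.intent` and the entities under `slots`, and carries more fields than shown here. `lookup_weather`, `speak` and `handle_request` are hypothetical names, and the weather answer is a hardcoded stand-in for a real API call.

```python
import json


def lookup_weather(city):
    # Hypothetical stand-in for a call to a real weather API.
    return "It is cloudy in {}.".format(city)


def speak(text, end_session=True):
    # Build the response body that Alexa converts to speech.
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle_request(body):
    # Map the already-parsed request from Amazon to a spoken reply.
    request = body.get("request", {})
    if request.get("type") != "IntentRequest":
        # E.g. a LaunchRequest: the user opened the skill without a question.
        return speak("Ask me about the weather in a city.", end_session=False)

    intent = request.get("intent", {})
    if intent.get("name") == "KnowWeather":
        city = intent.get("slots", {}).get("city", {}).get("value")
        if city:
            return speak(lookup_weather(city))
        return speak("Which city?", end_session=False)

    return speak("Sorry, I did not understand that.")


if __name__ == "__main__":
    example = {
        "request": {
            "type": "IntentRequest",
            "intent": {
                "name": "KnowWeather",
                "slots": {"city": {"name": "city", "value": "London"}},
            },
        }
    }
    # The JSON printed here is what the web service would return to Amazon.
    print(json.dumps(handle_request(example), indent=2))
```

In a real deployment this function would sit behind an HTTPS endpoint, and the service would also need to verify that requests really come from Amazon, which this sketch skips.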

Want to code an app for it in Python? Read:

- their getting started guide

- the web service docs (besides a web service, you can also implement a skill with AWS Lambda, which I found very limiting)

- alexandra’s docs

Cookie cutter

After going through a number of issues getting an Alexa skill up and running properly in Python via Kubernetes, I thought I’d abstract the core setup in a cookie cutter. To get started:

cookiecutter https://github.com/n-batalha/cookiecutter-alexa-python

Then continue with these instructions. It should take you from nothing to a working app on a real Echo device in ~30 minutes.

The included skill is barebones, if that. For a small number of hardcoded ingredients, it suggests a replacement if you ask for one: “Alexa, ask cook bot what does replace lemon?”
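
To give an idea of how little logic such a skill needs, here is a minimal sketch of a hardcoded replacement lookup. It is not the cookiecutter's actual code: the `REPLACEMENTS` table, its entries and the `replacement_answer` function are all made up for illustration.

```python
# Hypothetical table of hardcoded ingredients and their substitutes.
REPLACEMENTS = {
    "lemon": "lime",
    "butter": "margarine",
    "buttermilk": "milk with a little lemon juice",
}


def replacement_answer(ingredient):
    # Return the sentence the skill would speak for the requested ingredient.
    if not ingredient:
        return "Which ingredient do you want to replace?"
    key = ingredient.strip().lower()
    substitute = REPLACEMENTS.get(key)
    if substitute is None:
        return "Sorry, I don't know a replacement for {}.".format(key)
    return "You can replace {} with {}.".format(key, substitute)


if __name__ == "__main__":
    print(replacement_answer("Lemon"))
```

The ingredient would arrive as an entity extracted by Amazon, so the function only has to normalise it and handle the cases where it is missing or unknown.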

TODO

What is not included, as of January 2017, is:

- proper logging (for exceptions and performance monitoring): hopefully someone will later do a Helm chart for Sentry and others to easily deploy these

- autoscaling, for both pods and the nodes

- some patterns of dialogues along with more examples of the API (with tests)

- CI/CD

- test environments

- integrated analytics

- local Kubernetes tests (currently it tests the app itself, but everything up from gunicorn is untested)
